Project Info

001

6 months

Role

Lead Product Designer

Team

Zhiyuan Chen (Lead Product Designer)

Tina Chen (Product Designer)

Shivang Gupta (Head of Product)

Bill Guo (Product Manager)

Zach Levonian (ML Engineer)

Responsibility

Led the design effort from scratch to implementation, through user research, synthesis, conceptualization, prioritization, rapid prototyping, wireframing, interaction design and testing.

Company Info

002

Who is PLUS?

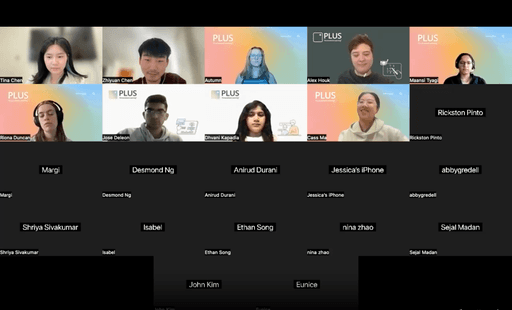

Led by Carnegie Mellon University and Stanford University, PLUS is a tutoring platform that combines human and AI tutoring to bridge opportunity gaps in math education.

3000 +

Middle School Students

500 +

Math Tutors

3000 +

Tutoring Hours Per Week

Case Summary

003

Problem: In a race against time, tutors struggle to make math stick and engage students

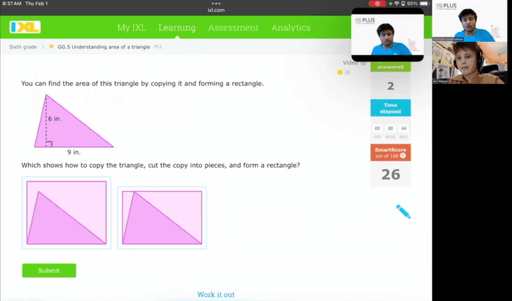

Tutors at PLUS conduct 30-minute sessions with about 5 students, giving them only 6 minutes per student on average. At the same time, they often struggle to explain math concepts clearly and efficiently, or to keep students engaged. In this race against time, tutors must maximize both clarity and engagement to ensure each student grasps the material and feels more motivated.

Requirement: Embracing the trend of AI

Following the wave of AI, the Head of Product wanted us to design an LLM-powered solution to help tutors with this issue.

Solution: Empowering tutors with a co-pilot

We developed an LLM-powered co-pilot to help tutors clearly explain math problems, provide effective encouragement, and ask strategic leading questions, ensuring they make the most of their limited time with each student to enhance engagement and learning outcomes.

Impact: Improving session efficiency

300 +

MAU

20%

decrease in time spent explaining math concepts

38%

increase in student engagement

End Results

004

Users can review the steps first and adjust the number of steps if the explanation feels too detailed or too brief

Users can expand each step to view the encouragement and leading questions

Detailed Process

Research

001

Leading the effort to identify user pain points

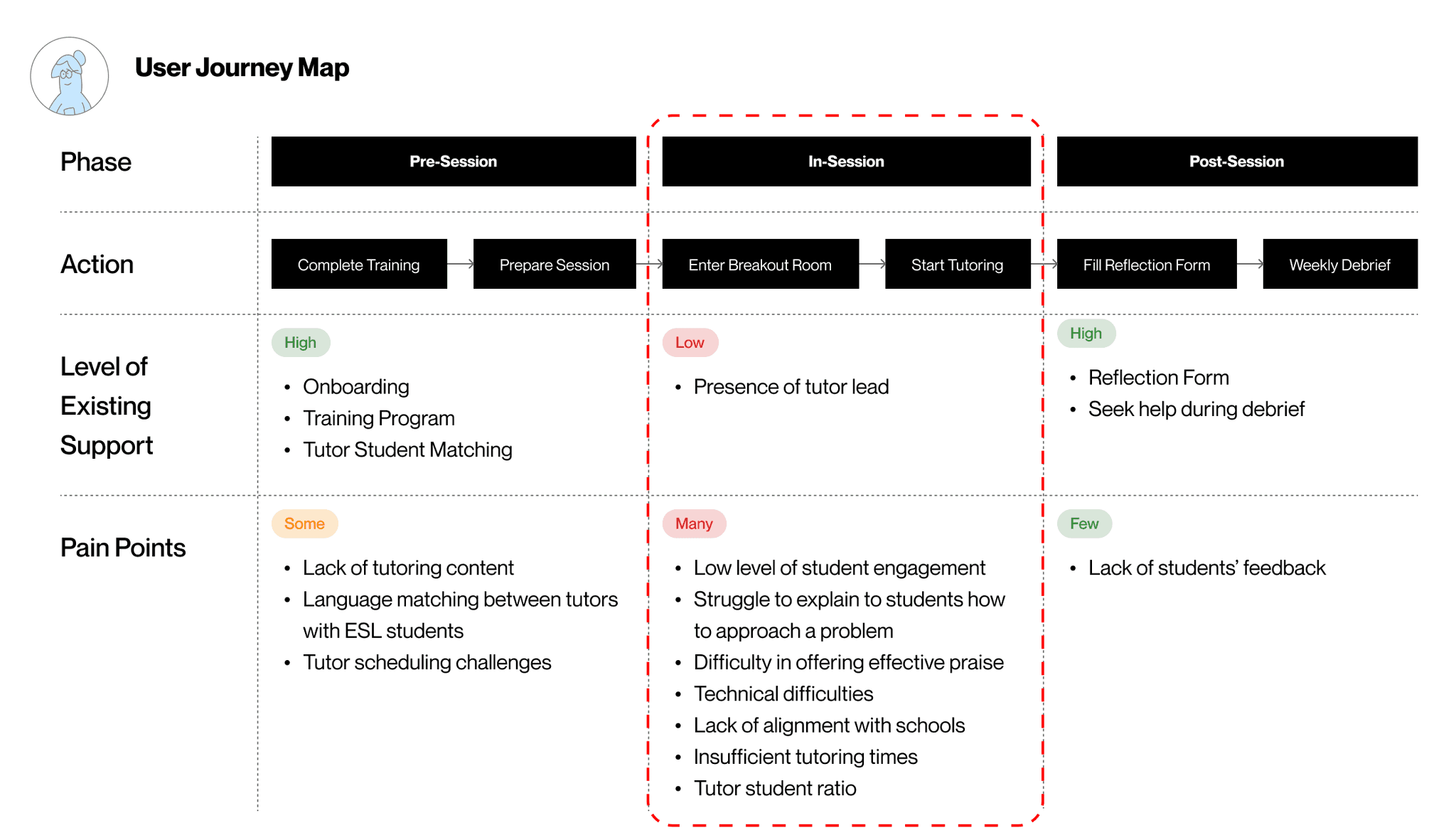

12 Video Analyses

Understand session structure

Observe tutor and student behaviors and interaction patterns

Identify challenges and frictions

2 Focus Group Interviews

Understand tutors' pain points, including when they occur, their frequency, and severity

Understand how they manage challenges and the support available to tutors

After synthesizing the results into a user journey map, I decided to focus on the in-session phase, because its inadequate existing support and numerous pain points highlight a significant opportunity for intervention.

Mapping user pain points and the level of existing support onto various phases

Ideation

002

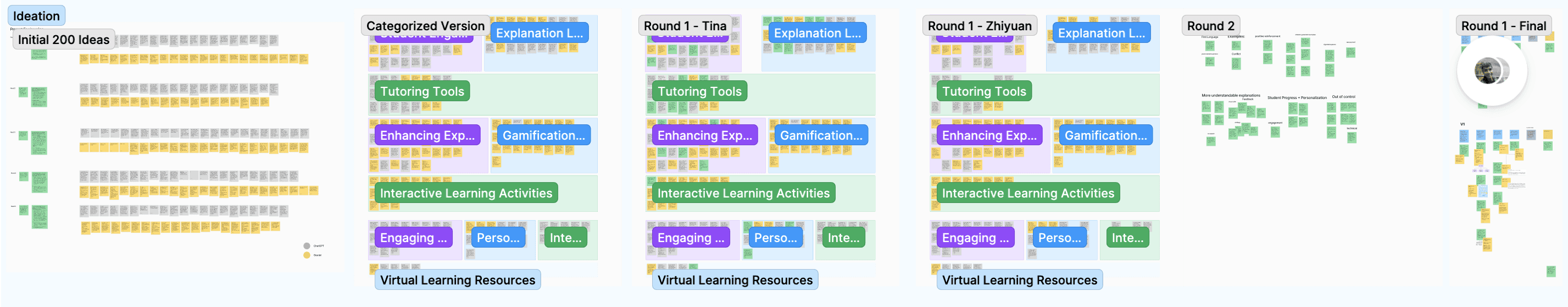

From 0 to 200: A wild exploration with AI-driven brainstorming

To quickly generate a wide range of creative ideas, I decided to leverage AI-driven brainstorming.

After 3 iterations of prompt engineering, in which I evaluated inputs and outputs side by side to find the prompt that produced the most reliable ideas, I generated over 200 ideas within just 1 hour.

Synthesis

003

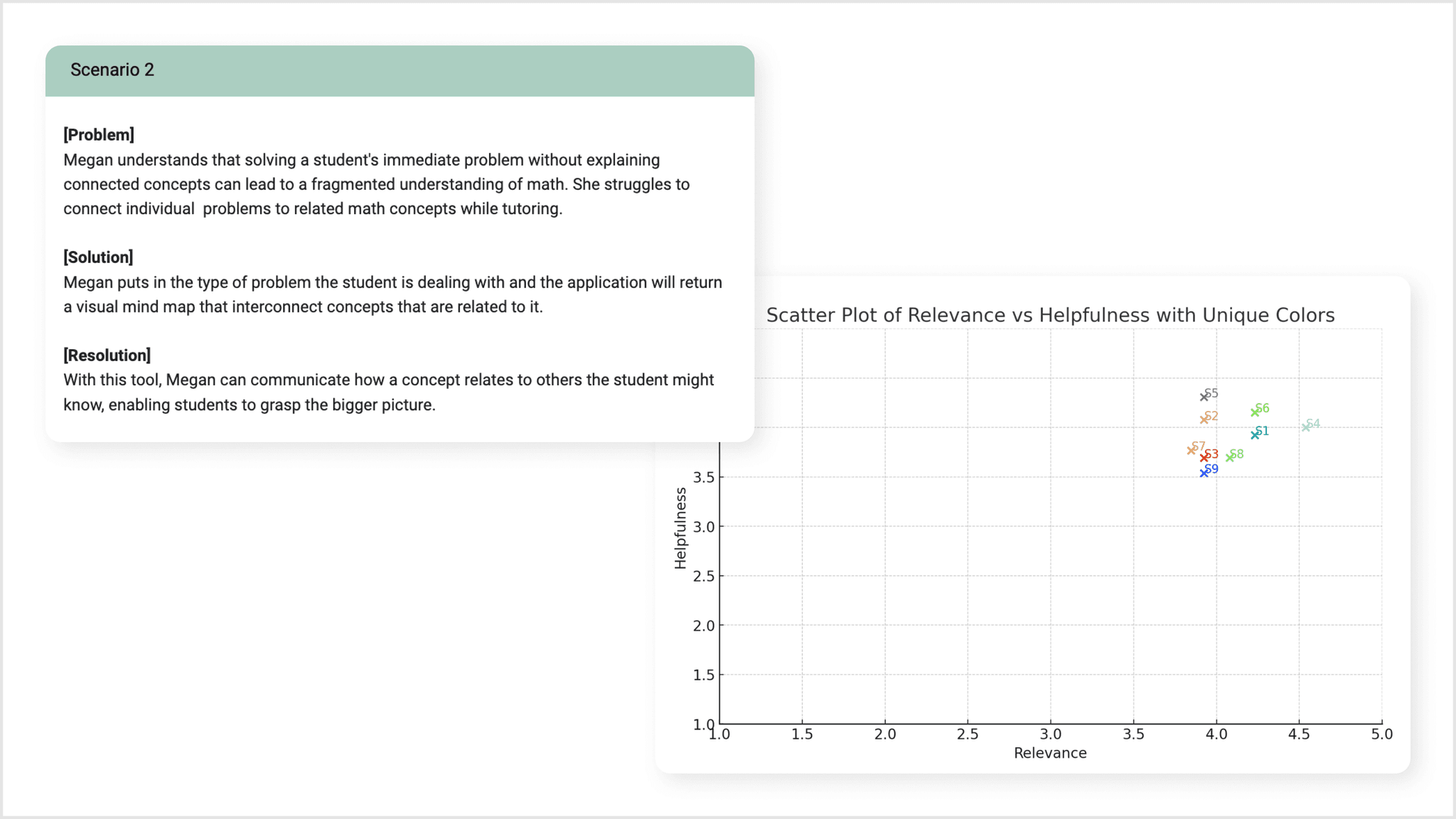

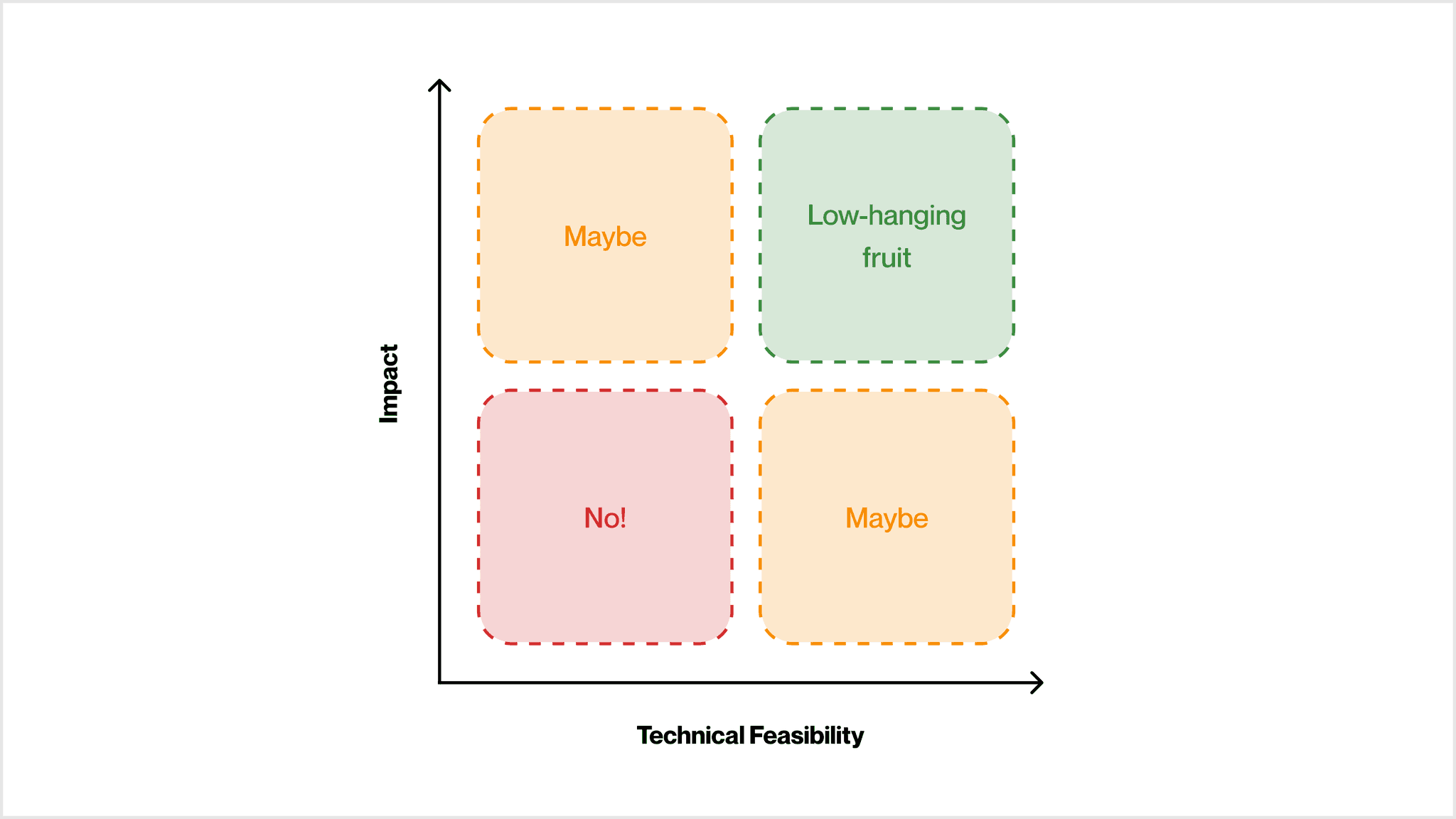

From 200 to 2: Identifying a deliverable MVP

After several rounds of initial filtering, we trimmed our potential ideas from 200 to 10. I then used a multi-method approach to gather input from different stakeholders, narrowed the ideas further, and eventually landed on 2 solutions that are high-impact and low-effort.

➡️ Solution I

A step-by-step guide to math problems for tutors to provide explanations efficiently

➡️ Solution II

Strategic leading questions for tutors to ask students instead of offering answers directly

Rapid Prototyping

004

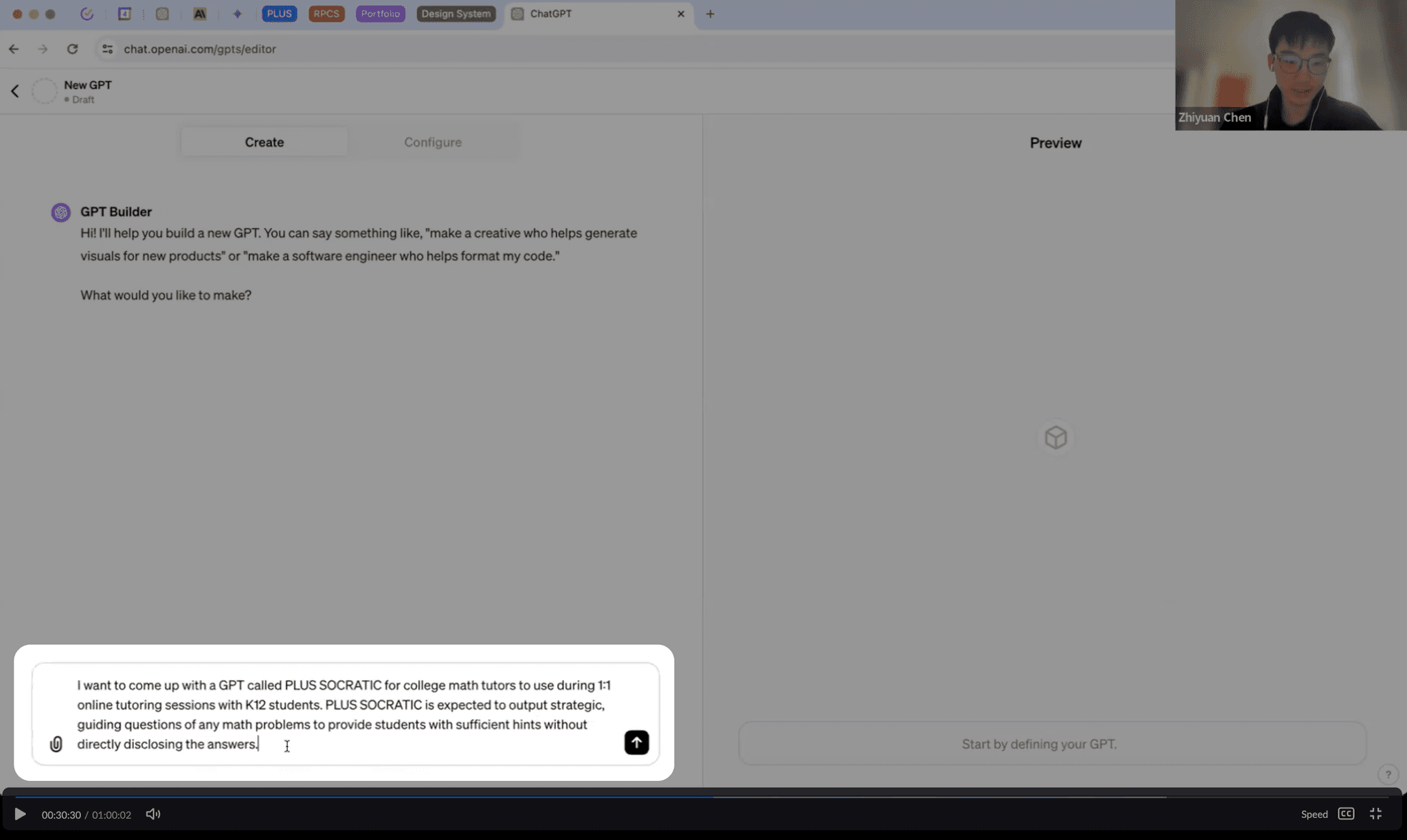

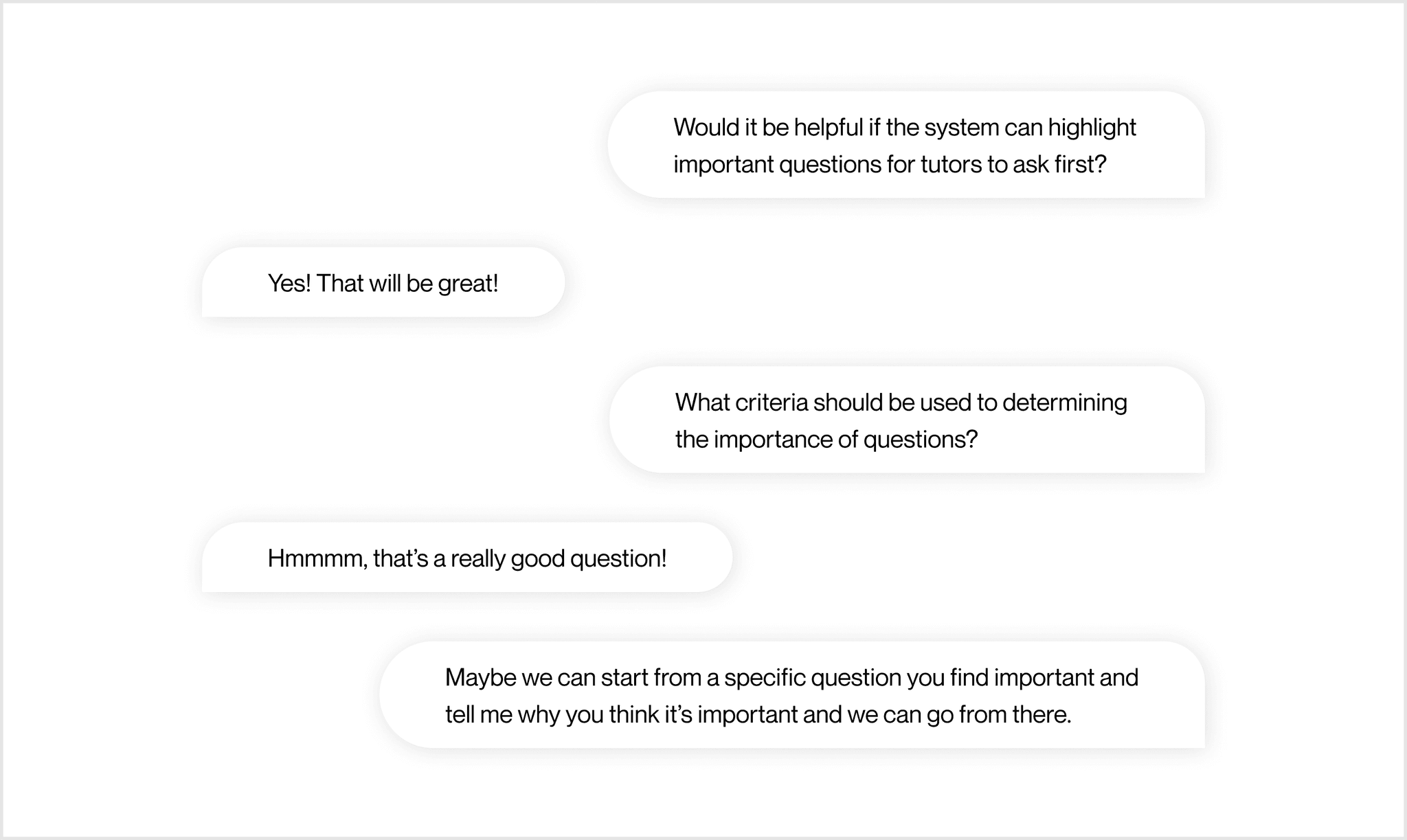

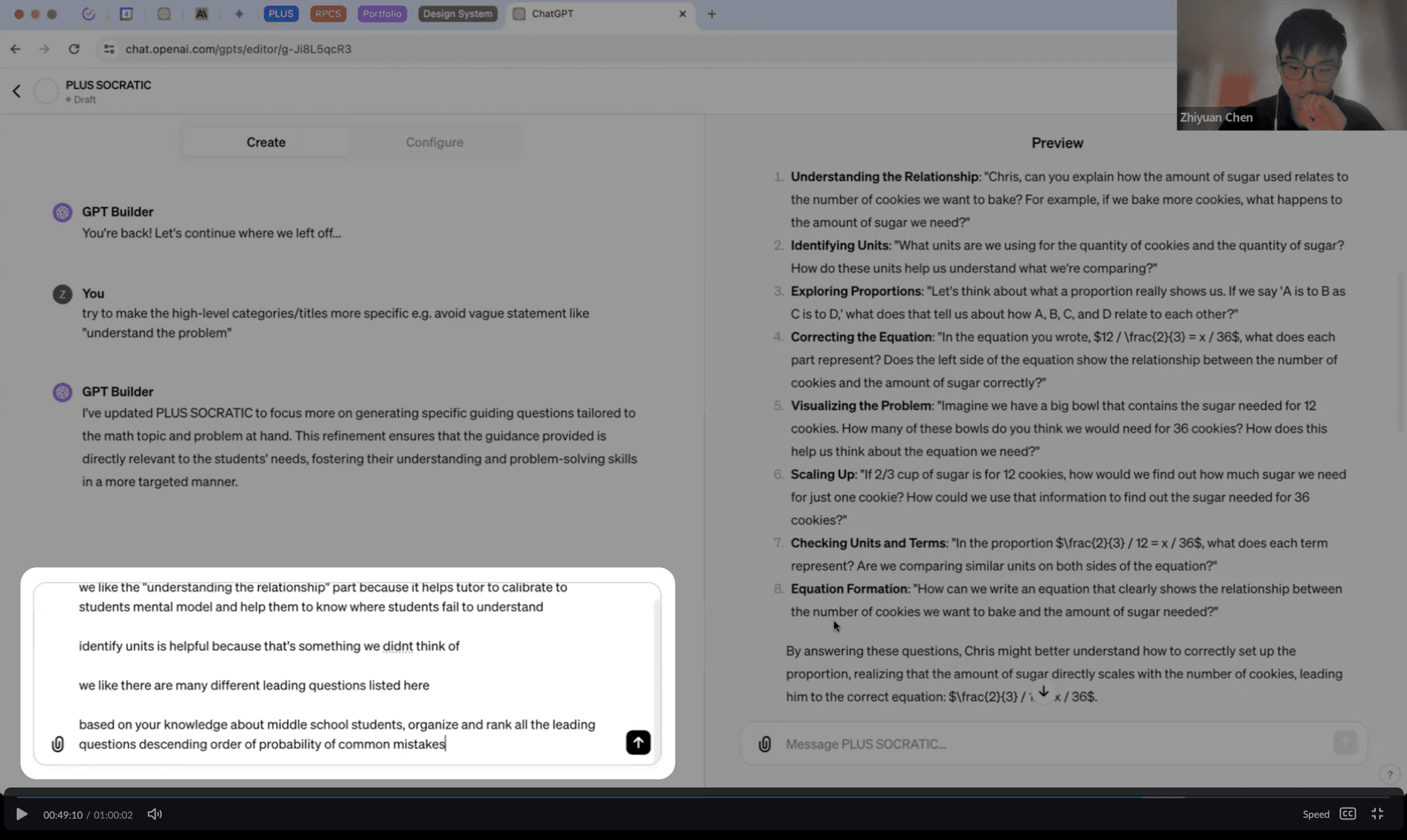

Co-create solutions for effective and desirable LLM output

Although the design direction was clear, we were unsure what kind of content output would be most helpful and meet tutors' needs. To develop a tool that would be useful for them, we conducted 5 participatory design sessions, inviting tutors to build the co-pilot together with us.

Design Decisions

005

Model Iterations

006

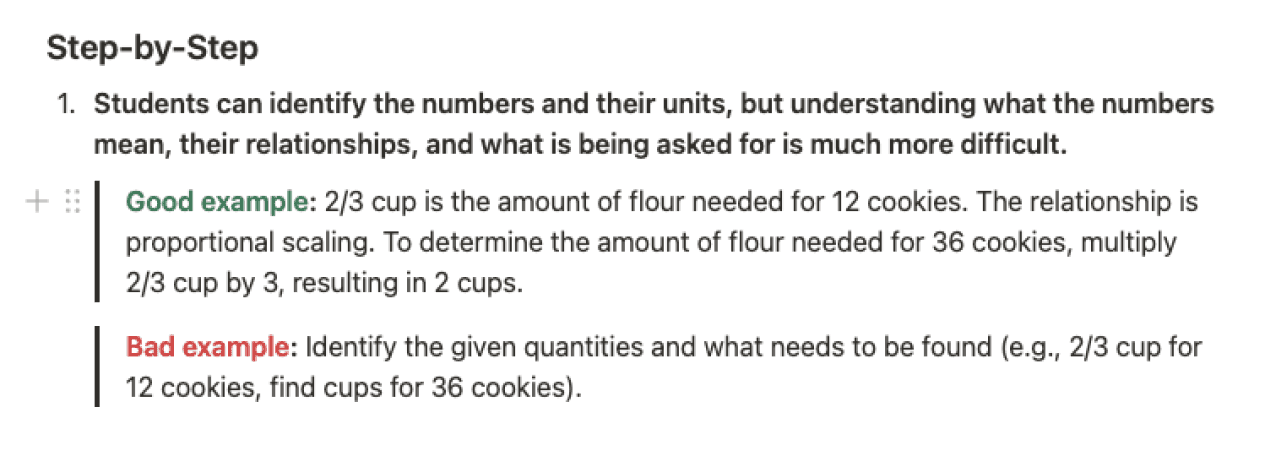

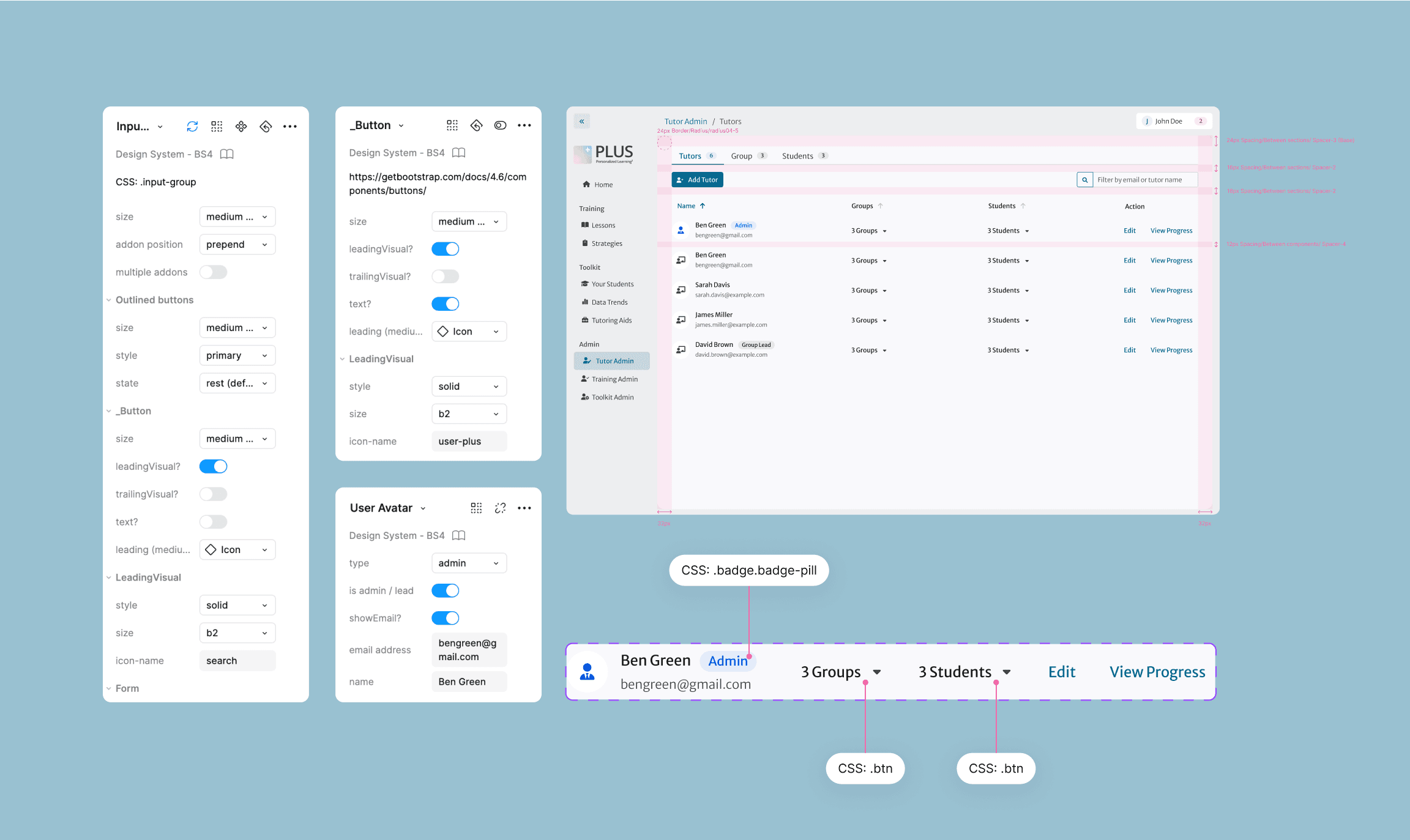

Transform SME feedback into clear action steps for the ML engineer

To continuously enhance model output, I conducted two rounds of feedback collection from tutor supervisors. I synthesized this feedback into concrete and actionable steps for developers to iterate on.

Initial feedback, unstructured and wordy

Synthesized feedback, contextual, actionable and with examples

Reflection

007

If I had a chance to redo the project, I would…

Under the same constraints, I would revisit ideas tutors found highly relevant but not desirable, as this indicates we identified the right problems but didn’t propose the best solutions, leaving room for improvement.

If there were no constraints, I would…

I would explore additional AI use cases and focus on matching AI capabilities to tutors' needs, including those beyond the in-session phase of tutoring. While some opportunities were initially excluded as out of scope after research, there may be valuable roles for AI as a supportive tool that were previously overlooked.

I would consider integrating additional functionalities into the tool, as there were a few ideas closely ranked behind the two we eventually pursued, which could still provide significant value if implemented.

The next step is …

The next step involves gathering data and identifying opportunities to enhance existing features or introduce new ones. I would continue soliciting user feedback on model performance and work with ML engineers to iterate and improve. I would observe tutors using the tool in real time as a fly on the wall, identifying anything that requires optimization. Lastly, I would revisit solutions initially excluded from the MVP and consider integrating them to expand functionality.